The State of AI Image Generation in 2026: Trends, Models & What's Next

AI image generation has matured from a fascinating experiment into critical infrastructure for millions of creators, marketers, and businesses. In just three years, the technology has gone from producing obviously artificial images to generating output that professional photographers can't reliably distinguish from their own work. This article is a snapshot of where we are in mid-2026 — the dominant models, the capabilities that seemed impossible a year ago, the limitations that remain, and the trajectory for what comes next.

The Model Landscape

Flux: The Photorealism King

Flux models (developed by Black Forest Labs) have established themselves as the industry standard for photorealistic image generation. The Flux Pro model produces images with remarkable fidelity: accurate skin textures, natural lighting behavior, correct material rendering, and compositional intelligence that consistently produces well-framed, visually compelling results.

What makes Flux particularly dominant in 2026 is its versatility. It handles portraits, landscapes, products, architecture, and abstract concepts with equal competence — something earlier models struggled with, often excelling at one category while producing mediocre results in others.

Flux Schnell (the fast variant) has become the workhorse model for high-volume content creation, trading a small amount of quality for dramatically faster generation. For social media content and iterative creative work, that tradeoff is rarely noticeable.

GPT Image 2: OpenAI's Flagship

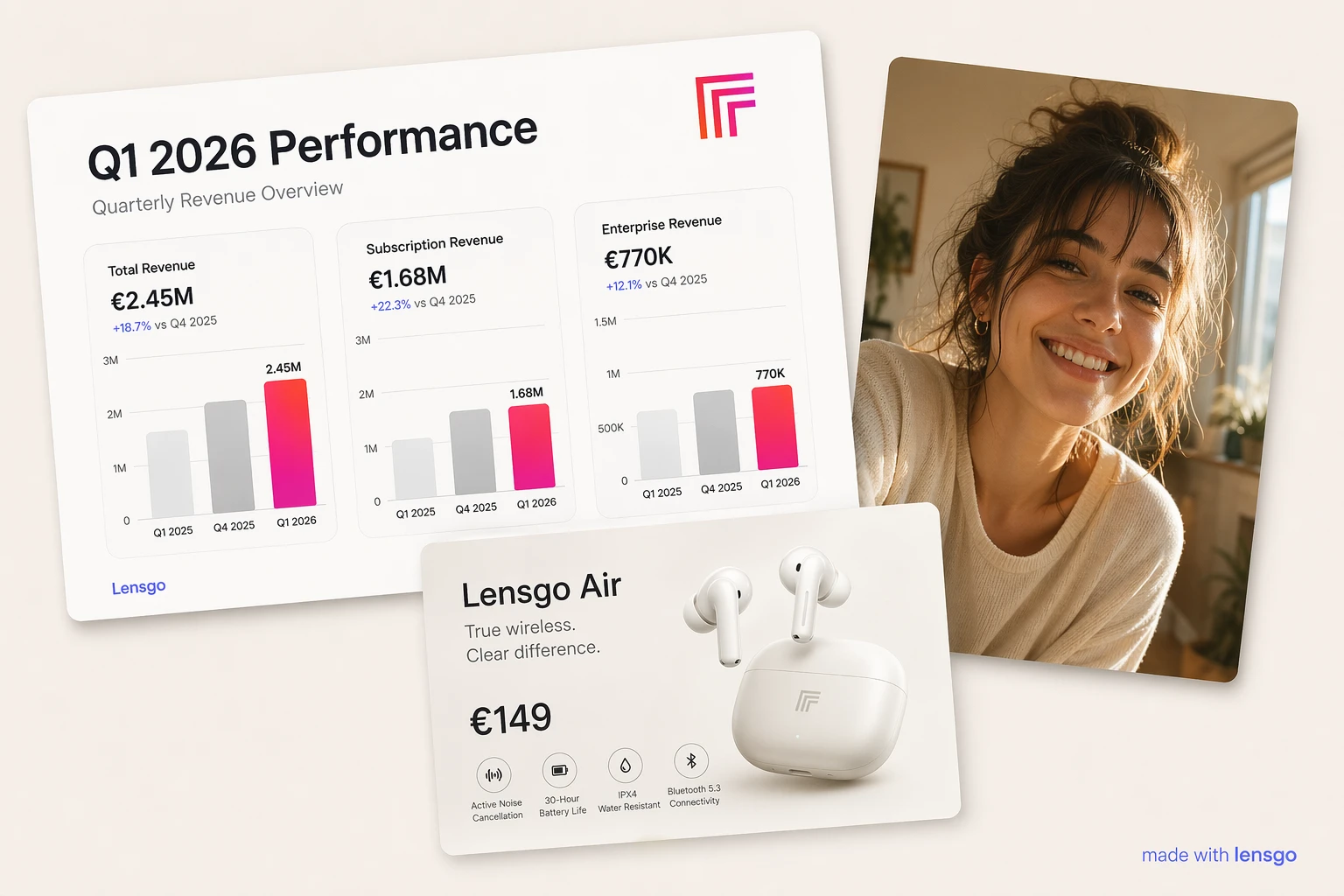

OpenAI's flagship image model in 2026 is GPT Image 2, which succeeded DALL·E 3 and raised the bar on three fronts: dense text rendering (infographics, UI mockups, posters), instruction-following edits that preserve the rest of the scene, and native multi-image composition (up to 10 reference images in a single call). For any workflow where text-in-image accuracy or precise editing matters, GPT Image 2 is now the default choice — we wrote up a full launch breakdown with examples when it landed on Lensgo.

DALL·E 3 is still available for ChatGPT Plus users who want incidental image generation without switching tools, but for production workflows GPT Image 2 is a clear upgrade. See our side-by-side comparison: GPT Image 2 vs DALL·E 3.

Stable Diffusion and Open Source

The open-source community around Stable Diffusion continues to thrive. While the base models may not match the polish of Flux or OpenAI's models, the ecosystem of fine-tuned models, LoRAs, ControlNets, and community extensions offers a level of customization and control that no proprietary platform can match.

The open-source approach has become essential for enterprise use cases where data privacy, model customization, and deployment control are paramount. Companies that need to generate images on-premises or fine-tune models on proprietary data rely on Stable Diffusion's ecosystem.

Midjourney

Midjourney has maintained its position as the preferred tool for artistic and stylized imagery. Its distinctive aesthetic — slightly painterly, dramatic, and visually rich — has a loyal following among artists, designers, and creative professionals who value the Midjourney "look" over photographic realism.

What's Become Possible in 2026

Consistent Characters

Consistent characters are one of the most significant advances of the past year. It's now possible to generate the same character across multiple images with reliable consistency: same face, same build, same clothing. This has unlocked genuine visual storytelling, letting creators build narratives across multiple images with a recurring cast, something that was unreliable even twelve months ago.

Native Video Generation

AI video generation has graduated from experimental to practical. Models like Seedance 2.0, Kling, and Wan produce 4-8 second clips with coherent motion, consistent physics, and reasonable temporal stability. While AI video isn't replacing traditional cinematography, it's become a genuine tool for social media content, b-roll footage, and creative projects.

The image-to-video pipeline has become particularly powerful: generate a still image with precise control over composition and style, then animate it with natural motion. Because the composition is locked in before any motion is added, this two-step workflow typically produces more controlled, higher-quality results than direct text-to-video.

Controllable Composition

Compositional control has matured considerably over the past year. By combining techniques like ControlNet (conditioning generation on edge maps, depth maps, or poses), regional prompting (assigning different prompts to different areas of the canvas), and reference image guidance, creators can specify not just what appears in an image but where it appears, how large it is, and how it relates spatially to other elements. This control turns AI generation from "I hope it looks right" into "I have a good idea of what I'll get."
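Each tool implements regional prompting differently, but a common ingredient is a set of per-region masks that tell the model which prompt governs which part of the canvas. The Python sketch below shows only that mask-building step and rests on several assumptions. The `Region` class and `region_masks` function are hypothetical names, boxes are given in normalized coordinates, and the latent grid is 8x smaller than the output image, as in Stable Diffusion-style latent diffusion models. It does not show how a given tool then applies these masks (for example, by restricting cross-attention or blending latents).

```python
from dataclasses import dataclass


@dataclass
class Region:
    """A prompt pinned to one area of the canvas (hypothetical helper type)."""
    prompt: str
    # Normalized box (0.0-1.0): left, top, right, bottom
    box: tuple[float, float, float, float]


def region_masks(regions: list[Region], width: int, height: int,
                 downscale: int = 8) -> list[list[list[int]]]:
    """Rasterize each region's box into a 0/1 mask on the latent grid.

    Assumes the latent grid is `downscale` times smaller than the image.
    Returns one mask per region, plus a final background mask for cells
    that no region claims.
    """
    w, h = width // downscale, height // downscale
    masks = []
    for region in regions:
        left, top, right, bottom = region.box
        x0, x1 = round(left * w), round(right * w)
        y0, y1 = round(top * h), round(bottom * h)
        masks.append([[int(x0 <= x < x1 and y0 <= y < y1) for x in range(w)]
                      for y in range(h)])
    # Cells not covered by any region fall back to the global prompt.
    background = [[int(not any(m[y][x] for m in masks)) for x in range(w)]
                  for y in range(h)]
    return masks + [background]


if __name__ == "__main__":
    layout = [
        Region("a red vintage car", (0.0, 0.5, 0.5, 1.0)),
        Region("a lighthouse at dusk", (0.75, 0.0, 1.0, 0.5)),
    ]
    masks = region_masks(layout, 1024, 1024)
    names = [r.prompt for r in layout] + ["background"]
    for name, mask in zip(names, masks):
        print(name, sum(map(sum, mask)))
    # a red vintage car 4096
    # a lighthouse at dusk 2048
    # background 10240
```

For a 1024x1024 image, the car occupies the bottom-left quarter of the 128x128 latent grid, and every cell that no region claims falls into the background mask, where a global prompt would apply.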

Multi-Modal Integration

The boundaries between text, image, and video generation have blurred. Platforms now offer unified workflows where a text description can generate an image, which can be animated into a video, which can be modified through text feedback — all within a single interface. This convergence has made AI creation feel less like operating separate tools and more like directing a creative production.

What's Still Challenging

Hands and Fine Motor Details

The "AI hands" problem has improved significantly but isn't fully solved. Simple hand poses (resting on a surface, holding a large object) are handled well. Complex hand interactions (intricate finger positioning, multiple hands interacting, holding small objects) still produce occasional artifacts. The improvement trajectory is steep — this limitation will likely be negligible within a year.

Precise Text Rendering

Despite GPT Image 2's advances, generating images with perfectly accurate text remains inconsistent across most models. Long sentences, small text, and stylized typography still produce errors. For content that requires precise text, the most reliable workflow is generating the image without text and adding text in post-processing.

Complex Physical Interactions

Scenes involving complex physical interactions — multiple people in dynamic poses, objects interacting with realistic physics, complex mechanical systems — can still produce unnatural results. The AI understands composition and aesthetics better than physics, which means static, posed scenes look more convincing than dynamic action scenes.

Long-Form Narrative Consistency

While character consistency within a short series has improved dramatically, maintaining perfect consistency across 50+ images for something like a graphic novel or extended marketing campaign remains challenging. Slight drift in facial features, clothing details, and proportions accumulates over many generations.

The Business Impact

Creator Economy

AI image generation has fundamentally lowered the barrier to visual content creation. Individuals who previously couldn't afford professional photography — small business owners, bloggers, freelancers, educators — now produce marketing-quality visuals at near-zero marginal cost. This democratization has expanded the creator economy and increased the volume and quality of visual content across every platform.

Professional Photography

Contrary to early predictions, professional photography hasn't been replaced; it's been augmented. Photographers use AI for mood boards, creative exploration, and generating composite elements. Demand for authentic photography appears to have held up, or even grown, as AI-generated images become ubiquitous, because genuine captured moments carry a premium in an environment where anyone can generate a beautiful image.

Marketing and Advertising

The most dramatic business impact is in marketing. The ability to generate campaign-specific imagery on demand, A/B test visual approaches without production costs, and produce platform-native content at scale has transformed marketing economics. Marketing teams that previously allocated significant portions of their budget to visual production are reallocating those resources to strategy and distribution.

E-Commerce

Product photography — traditionally one of e-commerce's biggest operational costs — has been partially automated. AI generates lifestyle context, background variations, and seasonal styling for products, reducing the need for repeated studio shoots while increasing the volume of visual content per SKU.

What's Coming Next

Real-Time Generation

Generation times are approaching real-time. Within the next year, we expect to see interactive AI image generation where changes to a prompt produce visual updates in under a second. This will transform AI generation from a batch process into an interactive creative tool — more like painting on a canvas than submitting a request and waiting for delivery.

3D and Spatial

The extension of image generation into three-dimensional space is accelerating. Generating 3D objects, environments, and scenes from text descriptions will enable new categories of content creation — virtual staging for real estate, 3D product visualization, and content for spatial computing platforms.

Personalization at Scale

AI-generated imagery tailored to individual viewers — showing a hotel room configured for a family to one visitor and for a couple to another — will become standard in digital marketing. The economics of per-viewer image generation are approaching viability.

Regulatory Framework

The regulatory landscape will crystallize. Disclosure requirements for AI-generated content, clearer intellectual property frameworks, and industry-standard provenance tracking will bring needed clarity to a space that's currently operating in a legal gray area.

Conclusion

AI image generation in 2026 is a mature, capable technology with specific strengths (photorealism, speed, short-series character consistency, accessibility), acknowledged limitations (complex physical interactions, text rendering, long-form consistency), and a clear trajectory toward solving its remaining challenges. For creators and businesses, the question is no longer "should I use AI image generation?" but "how do I integrate it most effectively into my existing workflow?"

The most successful adopters are those who understand AI as a tool — one that amplifies human creativity rather than replacing it. The technology handles production; the strategy, storytelling, and authentic connection remain fundamentally human.

Explore what's possible with AI — start creating with the latest models including GPT Image 2, Flux, and Imagen 4, with free daily credits.